The AI boom is pushing memory demand well beyond high-bandwidth memory (HBM). Low-power DRAM is now under pressure too, with shortages emerging as chip developers including Nvidia, Qualcomm, and Tesla adopt LPDDR in next-generation processors, according to Chosun Biz.

A single AI server rack scheduled for release in 2026 could require LPDDR capacity equivalent to thousands of smartphones. The scale of that demand is already triggering aggressive procurement among handset manufacturers.

LPDDR is no longer a mobile-only technology. It is becoming core AI infrastructure. Memory suppliers are responding by prioritizing AI customers, squeezing availability across the broader market.

Samsung Electronics, SK Hynix, and Micron are all seeing stronger LPDDR pricing power — mirroring the gains HBM suppliers enjoyed as demand shifted toward higher-margin applications.

Vera Rubin platform highlights scale of demand

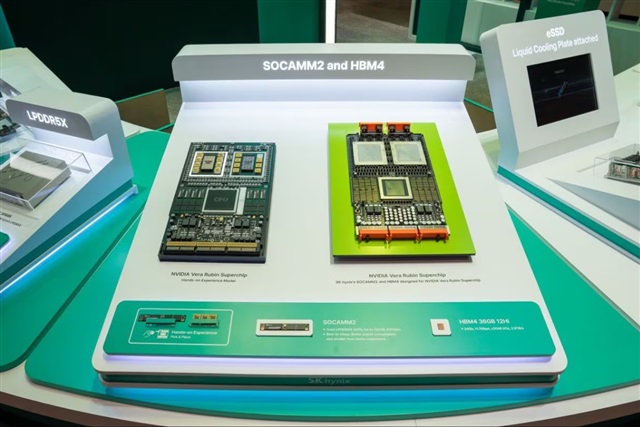

At the center of the demand surge is Nvidia's Vera Rubin platform. It integrates Vera CPUs and Rubin GPUs in a unified architecture combining LPDDR5X and HBM4.

Each Vera CPU supports up to 1.5TB of LPDDR5X through eight 192GB SOCAMM2 modules. At the rack level, that adds up to more than 50TB — roughly the memory capacity of 4,500 premium smartphones at 12GB per device.
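The capacity figures above can be checked with a few lines of arithmetic. This is a minimal sketch, not an official sizing. The module size, module count, and 12GB phone figure come from the article. The count of 36 Vera CPUs per rack is an assumption not stated here, chosen because it reproduces the "more than 50TB" total. The function names are illustrative only.

```python
# Figures quoted in the article.
MODULE_GB = 192        # capacity of one SOCAMM2 module
MODULES_PER_CPU = 8    # SOCAMM2 modules attached to each Vera CPU
PHONE_GB = 12          # LPDDR in one premium smartphone

# Assumption (not stated in the article): Vera CPUs per rack.
CPUS_PER_RACK = 36

GB_PER_TB = 1024


def cpu_capacity_gb(module_gb=MODULE_GB, modules=MODULES_PER_CPU):
    """LPDDR5X capacity attached to a single CPU, in GB."""
    return module_gb * modules


def rack_capacity_gb(cpus=CPUS_PER_RACK):
    """LPDDR5X capacity across all CPUs in one rack, in GB."""
    return cpu_capacity_gb() * cpus


def phone_equivalents(total_gb, phone_gb=PHONE_GB):
    """Whole smartphones whose combined memory matches total_gb."""
    return total_gb // phone_gb


if __name__ == "__main__":
    per_cpu = cpu_capacity_gb()
    per_rack = rack_capacity_gb()
    print(f"Per CPU:  {per_cpu} GB ({per_cpu / GB_PER_TB:.1f} TB)")
    print(f"Per rack: {per_rack} GB ({per_rack / GB_PER_TB:.1f} TB)")
    print(f"Phone equivalents: {phone_equivalents(per_rack)}")
```

Under that assumption the rack holds 54TB of LPDDR5X, equal to about 4,600 phones at 12GB each, which is in line with the roughly 4,500 cited.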

AI chips are acting as a "black hole" for low-power DRAM, absorbing supply at an accelerating pace. Shortages are expected to intensify in the second half of 2026 as Vera Rubin ramps up.

SOCAMM2 emerges as new memory layer

Underpinning the shift is SOCAMM2, a server-optimized LPDDR5X module built for AI chips. Memory suppliers have begun ramping related products, positioning it as an intermediate layer between HBM and traditional system memory.

SOCAMM2 stacks LPDDR dies closer to processors. The result is higher bandwidth and lower latency than DDR-based alternatives, with lower power draw than conventional server memory.

The architectural shift depends heavily on packaging advances. Vera Rubin uses TSMC's CoWoS technology, connecting CPU, GPU, and memory through high-speed interconnects rather than standard board-level configurations.

Nvidia has reportedly added Nanya Technology to its supply chain, in collaboration with TSMC. The move aims to diversify LPDDR sourcing beyond the dominant South Korean suppliers. It would also mark the first time a Taiwanese memory maker has entered Nvidia's AI server primary memory ecosystem.

Prices surge as smartphone supply tightens

The supply crunch is already rippling through downstream markets. Memory vendors are steering supply toward higher-margin AI customers, leaving smartphone makers in a difficult position.

Recent LPDDR5X negotiations between Samsung Electronics and Apple illustrate the pressure. Prices reportedly more than doubled, from just above US$30 in early 2025 to around US$70 in early 2026. Apple is said to have accepted the terms to lock in supply.

Contract prices for LPDDR4X and LPDDR5X rose about 90% quarter-on-quarter in the first quarter of 2026, the steepest increase on record.

Demand broadens across AI chipmakers

Nvidia is not alone. Tesla's next-generation autonomous driving chip, AI5, is expected to carry 192GB of LPDDR5X per chip. Qualcomm's upcoming AI200 platform is designed to support up to 768GB of LPDDR per card.

Qualcomm CEO Cristiano Amon recently met with major memory suppliers in South Korea, a move widely seen as an effort to secure LPDDR supply as the market tightens.

As AI workloads scale across cloud and edge environments, LPDDR is set to become a critical tier in next-generation memory hierarchies — moving from mobile devices into the heart of the data center.

Credit: Sherri Wang

Article edited by Jerry Chen