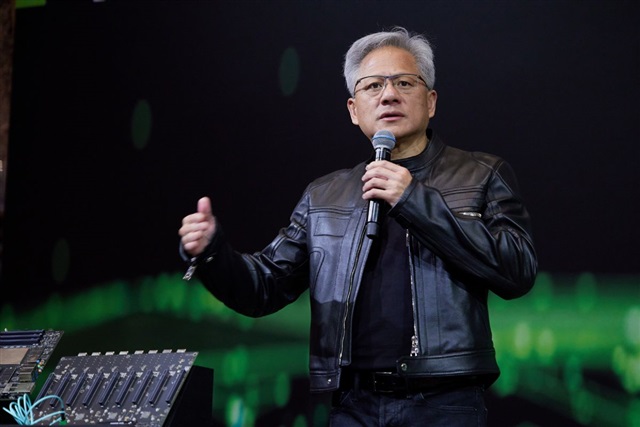

In September 2025, Nvidia CEO Jensen Huang made a rare joint livestream appearance with Intel CEO Lip-Bu Tan to announce a US$5 billion equity investment in Intel. In March 2026, Nvidia followed up with a US$2 billion investment in Marvell Technology. Why...
