Samsung Electronics is reportedly preparing to allocate much of its Pyeongtaek P4 cleanroom capacity to next-generation high-bandwidth...

SK Hynix disputed reports that it is considering shifting DRAM investment at its new M15X memory production base in Cheongju, South Korea,...

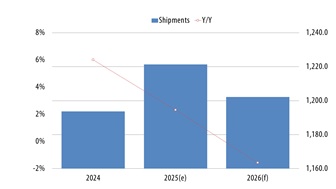

Samsung Electronics and SK Hynix are both riding a historic memory upcycle, but a profit gap of about KRW15 trillion (approx. US$10 billion)...

Samsung is accelerating one of its most aggressive memory capacity buildouts in years, aiming to bring its Pyeongtaek Line 4 fab, or P4,...

SK Hynix showcased its latest high-bandwidth memory (HBM) technologies at TSMC's North America Technology Symposium 2026, highlighting...