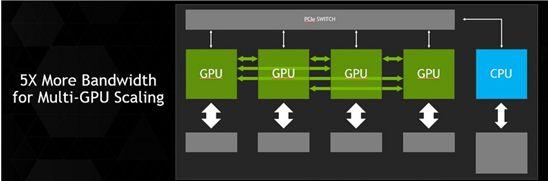

Nvidia has announced plans to integrate a high-speed interconnect, called NVLink, into its future GPUs, enabling GPUs and CPUs to share data five to 12 times faster than they can today.

This will eliminate a longstanding bottleneck and help pave the way for a new generation of exascale supercomputers that are 50-100 times faster than today's most powerful systems, the vendor said.

Nvidia will add NVLink technology to its Pascal GPU architecture, expected to be introduced in 2016, following Nvidia's new Maxwell compute architecture for 2014. The new interconnect was co-developed with IBM, which is incorporating it in future versions of its Power CPUs.

With NVLink technology tightly coupling IBM Power CPUs with Nvidia Tesla GPUs, the Power data center ecosystem will be able to fully leverage GPU acceleration for a diverse set of applications, such as high performance computing, data analytics and machine learning.

Today's GPUs are connected to x86-based CPUs through the PCI Express (PCIe) interface, which limits the GPU's ability to access the CPU memory system and is four to five times slower than typical CPU memory systems. PCIe is an even greater bottleneck between the GPU and IBM Power CPUs, which have more memory bandwidth than x86 CPUs. Because the NVLink interface will match the bandwidth of typical CPU memory systems, it will enable GPUs to access CPU memory at its full bandwidth.
